Deep Learning Neural Networks

AI-assisted crystal structure analysis

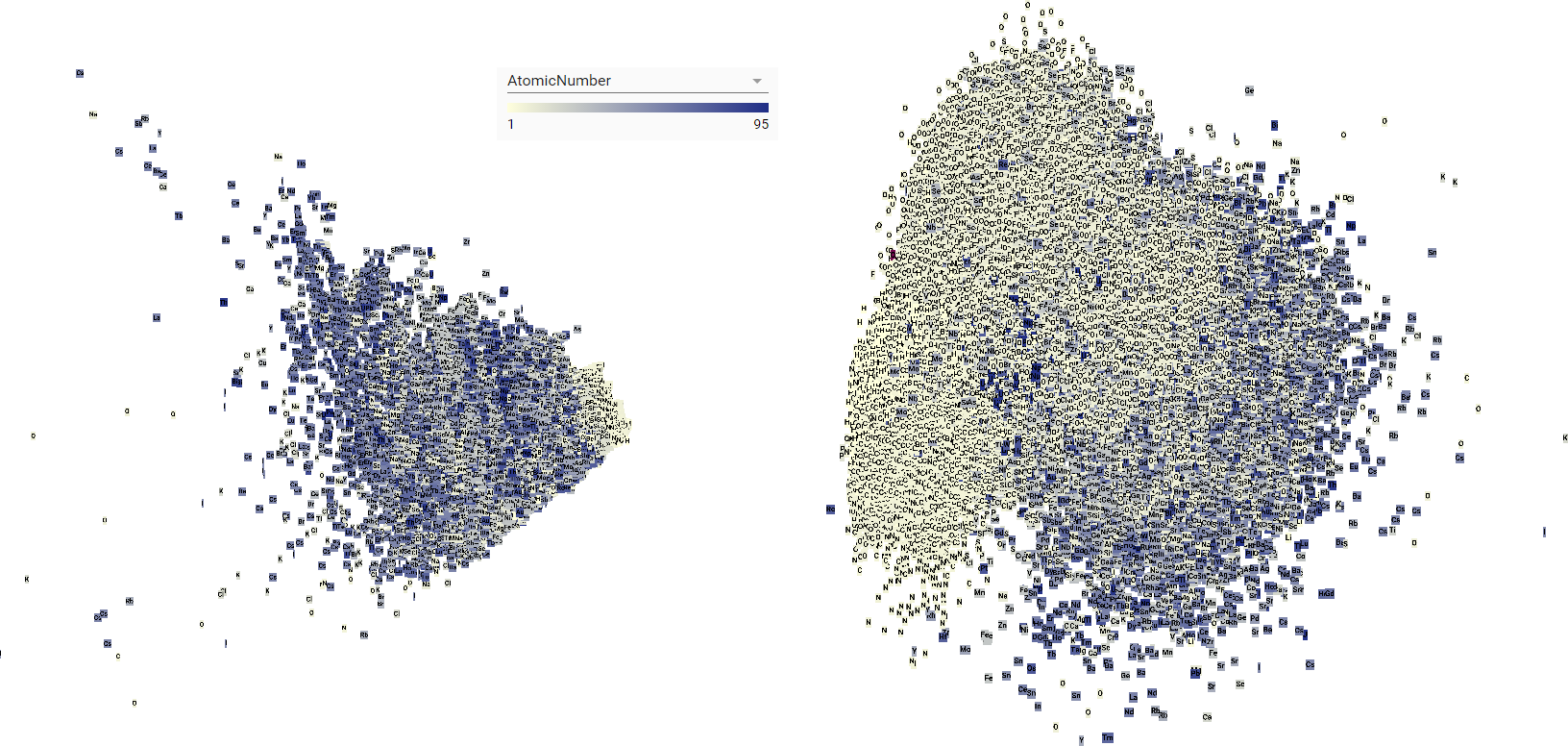

Figure 1. 2D representations produced using principal component analysis of the topological description (2000D normalized atomic fingerprint, left) and the learned representation (256D, right). The increased variation among nonmetals such as oxygen and carbon (white squares) in the learned representation is more consistent with our chemical understanding of atoms.

The development of effective machine learning algorithms depends heavily on the input representation.1 An ideal representation discards irrelevant information, retains the features relevant to a specific task, and disentangles the factors of variation.1 Much of the time and effort in model development is spent designing useful features.1-4 Deep neural networks integrate feature design into the machine learning process, facilitating the rapid development of models with state-of-the-art performance in a wide variety of applications.1,4

We investigate a neural network model that predicts the chemical element on a crystallographic site from a topological description, specifically the radial distribution of the surrounding atoms (purely geometric information). This model is later used in a prediction algorithm to rapidly identify promising crystal structures. We observe evidence that the autoencoder has learned to transform the topological description it is given into a representation that better reflects conceptual variation (Figure 1).
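To make the input concrete, the following is a minimal sketch of how a radial-distribution fingerprint for a single site might be computed. It is not the implementation used in this work. The function name `radial_fingerprint`, the cutoff `r_max`, the Gaussian width `sigma`, and the unit-length normalization are all assumptions chosen for illustration; only the 2000-dimensional length and the purely geometric input come from the text. The sketch also assumes that the neighbor list already contains any periodic images within the cutoff.

```python
import numpy as np


def radial_fingerprint(site, neighbors, r_max=10.0, n_bins=2000, sigma=0.05):
    """Gaussian-smeared radial distribution of neighbors around one site.

    Only distances are used, so the result carries no information about
    the chemical identity of the neighbors. r_max and sigma (in the same
    length unit as the coordinates) are illustrative assumptions.
    """
    site = np.asarray(site, dtype=float)
    neighbors = np.asarray(neighbors, dtype=float).reshape(-1, 3)

    # Distances from the central site to every neighbor, inside the cutoff.
    distances = np.linalg.norm(neighbors - site, axis=1)
    distances = distances[(distances > 1e-8) & (distances < r_max)]

    # Place one Gaussian per neighbor on a fixed radial grid and sum them.
    grid = np.linspace(0.0, r_max, n_bins)
    spread = (grid[:, None] - distances[None, :]) / sigma
    profile = np.exp(-0.5 * spread ** 2).sum(axis=1)

    # Assumed normalization: scale to unit Euclidean length.
    norm = np.linalg.norm(profile)
    return profile / norm if norm > 0 else profile


# Hypothetical example: an octahedral site with six neighbors at 2.0 length units.
octahedron = 2.0 * np.array([
    [1, 0, 0], [-1, 0, 0],
    [0, 1, 0], [0, -1, 0],
    [0, 0, 1], [0, 0, -1],
])
fp = radial_fingerprint([0.0, 0.0, 0.0], octahedron)
```

A vector like `fp` would be the kind of fixed-length input from which a network predicts the element on the site. The choice of smearing width and cutoff controls how much geometric detail the fingerprint retains.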

References

- 1. Bengio, Y.; Courville, A.; Vincent, P. IEEE Trans. Pattern Anal. Mach. Intell. 2013, 35 (8), 1798–1828.

- 2. Bartók, A. P.; Kondor, R.; Csányi, G. Phys. Rev. B 2013, 87 (18), 1–16.

- 3. Isayev, O.; Fourches, D.; Muratov, E. N.; Oses, C.; Rasch, K.; Tropsha, A.; Curtarolo, S. Chem. Mater. 2015, 27 (3), 735–743.

- 4. LeCun, Y.; Bengio, Y.; Hinton, G. Nature 2015, 521 (7553), 436–444.